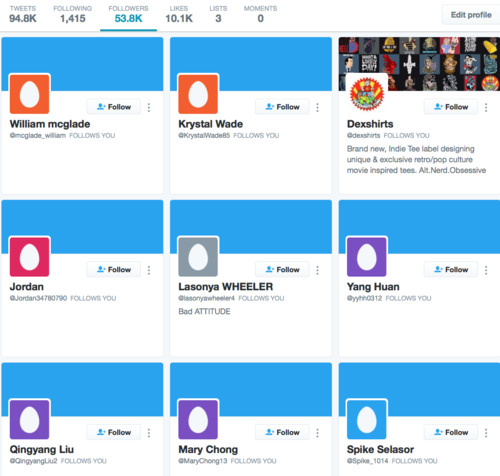

The picture above is a screenshot of my last nine followers on Twitter, as of about 10 minutes ago. You'll note that they are all "eggs" – that is, accounts that lack a personal avatar and instead carry the default Twitter icon, an egg.

But if you were to look more closely at those accounts, you'd see that's not the only thing they have in common. Apart from the mercifully real Dexshirts, they each follow exactly 21 other accounts. And those accounts are largely the same ones – Richard Branson, Elon Musk, The Spinoff, Jacinda Ardern, David Farrier, Ateed, the Herald, Auckland Council, Metro and others, with the odd change-up, like YouTube star Yousef Saleh Erakat. The follows have been added in a different order for each account, but they stop at 21.

I have 53,000 Twitter followers and I tend to appear in Twitter's "you might want to follow these people" box – there's a feedback loop there – and I'm thus at the level where, especially in the context of the local Twittersphere, I tend to attract fake follower accounts. It's not really a great use of my time to do anything about it – it's hard to curate your followers on Twitter (unlike on Facebook, relationships are not reciprocal) and many of them are culled by Twitter within a week anyway, in response to spam complaints.

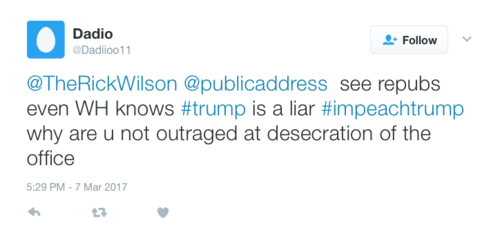

But what's happening right now seems different. Since I started writing this, another account that follows exactly 21 other accounts has followed me. It's much more than the usual level of activity. I only paid attention to all this because one of my recent followers replied to a retweet of mine:

I've seen this before, of course. It's exactly the pattern of pro-Trump bots and shitposters. In a recent post, I quoted this paragraph from Carole Cadwalladr's Observer story about the hard right's use of data science and social media automation to shape opinion:

> Sam Woolley of the Oxford Internet Institute’s computational propaganda institute tells me that one third of all traffic on Twitter before the EU referendum was automated “bots” – accounts that are programmed to look like people, to act like people, and to change the conversation, to make topics trend. And they were all for Leave. Before the US election, they were five-to-one in favour of Trump – many of them Russian. Last week they have been in action in the Stoke byelection – Russian bots, organised by who? – attacking Paul Nuttall.

I can't say if these are Russian bots. And, indeed, it would be a mistake to assume they are. They could simply be chasing and provoking controversy, as a means to currency for some other purpose. But Sam Woolley's piece for The Atlantic from November, with his research partner Douglas Guilbeault, is worth a look. They say:

> Our team monitors political-bot activity around the world. We have data on politicians, government agencies, hacking collectives, and militaries using bots to disseminate lies, attack people, and cloud conversation. The widespread use of political bots solidifies polarization among citizens. Research has revealed social-media users’ tendency to engage with people like them, a concept known as homophily by social scientists. In the book Connected, Nicholas Christakis and James Fowler suggest that social media invites the emergence of homophily. Social media networks concretize what is seen in offline social networks, as well—birds of a feather flock together. This segregation often leads to citizens only consuming news that strengthens the ideology of them and their peers.
>
> In this year’s presidential election, the size, strategy, and potential effects of social automation are unprecedented—never have we seen such an all-out bot war. In the final debate, Trump and Clinton readily condemned Russia for attempting to influence the election via cyber attacks, but neither candidate has mentioned the millions of bots that work to manipulate public opinion on their behalf. Our team has found bots in support of both Trump and Clinton that harness and augment echo chambers online. One pro-Trump bot, @amrightnow, has more than 33,000 followers and spams Twitter with anti-Clinton conspiracy theories. It generated 1,200 posts during the final debate. Its competitor, the recently spawned @loserDonldTrump, retweets all mentions of @realDonaldTrump that include the word loser—producing more than 2,000 tweets a day. These bots represent a tiny fraction of the millions of politicized software programs working to manipulate the democratic process behind the scenes.
>
> Bots also silence people and groups who might otherwise have a stake in a conversation. At the same time they make some users seem more popular, they make others less likely to speak. This spiral of silence results in less discussion and diversity in politics. Moreover, bots used to attack journalists might cause them to stop reporting on important issues because they fear retribution and harassment.

So anti-Trump (or at least pro-Clinton) bots aren't new, but they're not what I've typically seen in the past six months. The bots or paid human trolls who have interacted with me have been almost exclusively pro-Trump. Some of them are definitely human: one responded with telling (and amusing) indignation to my customary greeting, "How's the weather in St Petersburg?", demanding to know whether I was racist against Russians. You blew your cover there, dude.

But whatever it is, it feels like something is going on. And the fact that that something includes a ramping-up of anti-Trump provocations makes it even more intriguing. Is this a new entrant to the mass-manipulation game? Or is the same old network of automated emotional dividers recalibrating for a new zeitgeist?