Hard News: Gower Speaks

206 Responses


-

Hebe,

Good questions Russell. Good on Patrick Gower for fronting up -- a sight more democratic than much of our political process.

And of course Russell had to break that embargo -- happy birthday PG.

-

Stats 101:

Not much is happening in these polls (statistically). But it never seems to slow down the narratives. This cartoon nails it:

Hover over that and it adds "and also all financial analysis..." and they should add "and all political polls when the methodology section gives margins of error based on simple random samples".

When you compare two polling periods (say NZF 4.9% this time vs last poll or some different organization's poll) you don't just see if they are within the confidence interval of one another. You multiply the margin of error by 1.414 (yes the square root of 2 -- which is the simplified approximation because this is only Stats 101) because you are comparing two percentages both of which have uncertainty. But that's just the beginning of the trail of compounding errors in the way things are analyzed.
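A rough sketch of where the 1.414 comes from, assuming two independent simple random samples of the same size n and roughly the same level of support p in both polls (these are the Stats 101 simplifications, not anything a polling company has published):

```latex
\operatorname{Var}(\hat{p}_1 - \hat{p}_2)
  = \operatorname{Var}(\hat{p}_1) + \operatorname{Var}(\hat{p}_2)
  \approx \frac{2\,p(1-p)}{n}

\mathrm{MoE}_{\text{diff}}
  = 1.96\sqrt{\frac{2\,p(1-p)}{n}}
  = \sqrt{2}\,\times 1.96\sqrt{\frac{p(1-p)}{n}}
  = \sqrt{2}\;\mathrm{MoE}
```

If the two polls have different sample sizes, the two variances are added separately and the factor is no longer exactly the square root of 2.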

You need to base your comparison on the ESS (effective sample size) not the usual 1000 they report. The ESS will be smaller because of several factors including: non response (the biggie!), weighting (which introduces error variance itself), and design effects.
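To give a feel for the weighting part of this only, here is a minimal sketch using Kish's approximation for effective sample size. The weights are made up for illustration, and the approximation says nothing about non response or other design effects, which would shrink the ESS further.

```python
import math


def kish_effective_sample_size(weights):
    """Kish's approximation: (sum of weights)^2 / (sum of squared weights)."""
    total = sum(weights)
    return total * total / sum(w * w for w in weights)


def margin_of_error(p, n, z=1.96):
    """95% margin of error for a proportion p, treating n as a simple random sample."""
    return z * math.sqrt(p * (1 - p) / n)


# Hypothetical weights: half the sample weighted down to 0.5, half weighted up to 1.5.
weights = [0.5] * 500 + [1.5] * 500
ess = kish_effective_sample_size(weights)

print(f"nominal n = {len(weights)}, effective n = {ess:.0f}")
print(
    f"margin at 50% support: {margin_of_error(0.5, len(weights)):.1%} nominal, "
    f"{margin_of_error(0.5, ess):.1%} using the ESS"
)
```

With equal weights the ESS equals the nominal sample size; the more the weights vary, the smaller it gets.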

Overall response rates for NZ polls have been dropping over the decades (like newspaper readership). As a researcher you are as responsible for those you did not survey as those you managed to reach. Response rates should always be made available, not only the overall one (probably below 50%) but also for each question. How misleading things can be by omitting non response has already been pointed out. And Pete George is correct in noting that non response swamps sample frame bias to do with telephone access. Two decades ago (yikes, two decades) I worked through some estimation of the level of sample frame bias when using telephone surveying in NZ (versus other methodologies like face to face). Back then mobile phones weren't the issue -- using telephone based surveying and getting research with quality specs was the issue.

Wyllie, A., Black, S., Zhang, J.F. & Casswell, S. (1994). Sample Frame Bias in Telephone Based Research in New Zealand, New Zealand Statistician, 29, 40-53.

As is usual, technology has changed everything but really changed nothing. Now the question has simply shifted to the sample frame bias associated with not including mobile phones. If the market research companies are up to the mark they will have included questions in their face to face surveys to find out about landline vs mobile use and can do the calculations if they choose to.

I've also done simulation work (long ago also) which considers levels of non response and what effect it has on the true confidence limits for your percentages. The results are so depressing that such appropriate widening of the confidence limits just never gets used.

I thought the new standards for reporting and the MediaWatch segment might get a little bit further than it did. I remain disappointed.

-

Those graphs on the Reid site are fascinating, and I really don’t want to start up with the ‘OMG bias’ stuff again, but it’s a damn shame the info in them so very rarely seems to make it into the mainstream narratives.

As an example, the big to do over Cunliffe’s ‘leafy suburbs’ comment.

Key dismissed a report on poverty, and Cunliffe said that showed how out of touch he is and that he needs to get out of his flash house more. This was presented, by everyone pretty much, as confirmation of the trend of Cunliffe being Sir Gaffe-a-lot, and a hypocrite, and so on.

But look at the numbers on “Is out of touch with ordinary people”.

Key: about 52%

Cunliffe: about 22%

A thirty point gap, on the actual point Cunliffe made. Not discussed at all as far as I remember, & certainly not being brought up as an example of anything a poll is showing. And yet look, there it is.

And ‘talks down to people’. From what we hear, Cunliffe is arrogant and aloof. And yet, and yet. There is data about what the perception is. Sitting there on a website no one knew about.

I’m not alleging political bias, and I freely admit that those examples are cherry picked, but there is a lot of info that isn't getting out, some of which is hard to reconcile with the narrative pundits tell us is derived from ‘perception being reality’.

There is something going on, not something deliberate, not something sinister. But something.

-

Andrew Geddis, in reply to

So John Key and National are 'masking' their donations by using restaurants and other events, where participants are making donations or paying over the top for services.

No. They aren't doing any such thing. I explained the whole thing here: http://www.pundit.co.nz/content/one-of-these-things-actually-isnt-quite-like-the-other

-

Andrew Geddis, in reply to

“Our poll shows National is (highly) likely to have 56 to 58 MPs.”

Not hard.

But to be fully "accurate" it would have to be something like "our poll shows National is (highly) likely to have 56 to 58 MPs, Labour is (highly) likely to have X to X+2, the Greens are likely to have Y to Y+2, NZ First is about equally likely to have either no MPs or Z MPs ... and if it has no MPs, then National, Labour and the Greens will get some more because of the wasted vote ... while the Maori Party might create a parliamentary overhang again or might only win the one seat, and ACT might get an MP or might not depending on Epsom ... and goodness knows what National is planning for Colin Craig, which could give him N to N+1 seats or none at all."

Yep. That's a story they'll want to run.

-

Sacha, in reply to

the info in them so very rarely seems to make it into the mainstream narratives

who makes those decisions?

and who benefits?

-

Sacha, in reply to

Yep. That's a story they'll want to run.

The underpants gnomes will not permit it.

-

Alistair McBride, in reply to

If the journos just used the language the public would get used to it very quickly. All the poll figures do is show a possible trend. The graphs they use need the band width which shows the trend even more clearly instead of the succession of "spikes" they currently portray.

-

Thanks for this, it's great stuff. This is the first time I've seen Gower provide a reasonable explanation for what usually strikes me as risible propaganda when I'm watching it.

-

James Green, in reply to

I’d just like to paraphrase Steve’s post.

For us to be confident that there is a real change in support between two polls, it would need to move by more than 4.3%*

The correct narrative is “Our poll was not adequately powered to detect any changes”.

To quote Andrew, “Yep. That’s a story they’ll want to run.”

*Assumes that both polls have a sample of 1000, giving a margin of error of 3.1, multiplied by 1.41, as outlined by Steve above. At the five percent threshold, a movement of 1.9% is required, and 2.6% at ten percent support.
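For anyone wanting to check the arithmetic, a minimal sketch assuming two independent simple random samples of 1000 and a 95% confidence level (z = 1.96). The function names are just for illustration. It comes out within about a tenth of a point of the figures quoted above.

```python
import math

Z_95 = 1.96  # normal critical value for a 95% confidence level


def margin_of_error(p, n, z=Z_95):
    """Margin of error for a proportion p from a simple random sample of size n."""
    return z * math.sqrt(p * (1 - p) / n)


def change_threshold(p, n, z=Z_95):
    """Smallest change between two independent polls of size n that exceeds the noise."""
    return math.sqrt(2) * margin_of_error(p, n, z)


for support in (0.50, 0.10, 0.05):
    moe = margin_of_error(support, 1000)
    needed = change_threshold(support, 1000)
    print(f"{support:.0%} support: +/- {moe:.1%}, change needed {needed:.1%}")
```

Swapping in an effective sample size smaller than 1000 widens every one of these numbers.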

-

Russell Brown, in reply to

Yep. That’s a story they’ll want to run.

Yes, well illustrated.

It also brings us back to Monday's point about the folly of drawing up virtual houses of Parliament at this point. But that's a hard sell.

-

It is worth remembering that the reason for our vigilance regarding political polling in advance of this election was the obvious bias in the way almost all of the mainstream media reported the polls prior to the last election. And the research organisations’ own body even confirmed this after the election itself. Please don’t let them get away with it again and thanks for holding them to account this time around. If the MSM deny this they should agree to run the results including the undecideds every time.

-

Andrew Robertson, in reply to

This was an excellent comment!

I agree a) that non-response is a far, far bigger issue than non-coverage in telephone surveys, and b) survey reports should include the response rate.

The problem is though, I've seen some very dodgy response rate formulas used in New Zealand by reputable companies. What the industry needs is an accepted formula and standard set of call outcome codes (like the American Association for Public Opinion Research has - http://www.aapor.org/Response_Rates_An_Overview1.htm#.UzvMLvmSySo). When the industry has that, I'd argue that publishing response rates should become mandatory. Until then, readers won't be able to compare like for like. The report that includes the more conservative calculation will just get slated by those who don't know the difference between the formulas.

The other thing to mention is that you can have a very non-representative survey that achieves a very high response rate, and a very representative survey that achieves a very low response rate. Response rate is not the only indicator of sample quality.

In surveys I've designed, I've occasionally sacrificed response rate to reduce response bias. As an example, consider the following two hypothetical surveys about same-sex marriage.

1) A random paper-based postal survey achieves a 45% response rate through the use of reminders, a second questionnaire sent to non-respondents, and a prize draw incentive.

2) A random telephone survey, introduced as a survey about ‘current issues’, achieves a 25% response rate.

In this instance the telephone survey (assuming good fieldwork practices) is likely to deliver a higher quality sample. In a postal survey potential respondents are able to see all the questions before they decide to take part. Although the initial mail-out would be random, people who feel strongly about the issue are more likely to respond. For this reason the postal survey sample will not be as representative as the telephone survey sample.

Disclaimer: I work for Colmar Brunton, but my views don't represent those of the company, etc, etc, etc

-

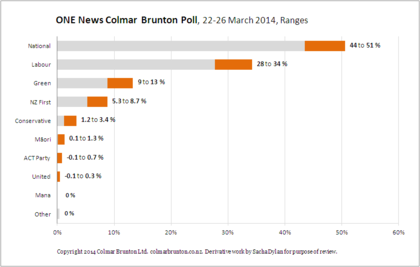

Pete George, in reply to

This is what you want?

National 45.9% +/- 3.1%

Labour 31.2% +/- 2.9%

Greens 11.2% +/- 2.0%

NZ First 4.9% +/- 1.3%

Maori 1.5% +/- 0.8%

Mana 1.1% +/- 0.6%

United Future 0.1% +/- 0.2%

Act 1.1% +/- 0.6%

Conservative 1.1% +/- 0.8%

Internet 0.4% +/- 0.4%

-

I was also critical of what I called 3 News' "lazy" habit of featuring John Key as commentary talent in their stories, sometimes to the extent of using the Prime Minister to explain the angle of the stories. Gower said they've also had complaints about the frequent use of Russel Norman in a similar context.

"It's something we always have to keep watching. We always have to go and get comment, you always want a counter-comment. And, for want of a better description, you get guys having free hits.

"It's one of the true weaknesses of television that in order to achieve balance you have a counter-soundbite. So we have to watch how much we use Key, we have to watch how much we use Norman or Cunliffe, we have to watch free hits full stop. [But] we also have to have the opposite person in there."

I'll grant Gower that, as far as he goes, which is both too much and not enough.

Sure, Cameron Brewer screeching for Len Brown's head on a pike every time he farts in public is "balance" but where's the news value? And yes, the Prime Minister of whatever day gets a lot more media attention by virtue of the office. It was true of Helen Clark -- who, IMO, sometimes got herself in trouble by compulsively having an opinion on everything, even when she really shouldn't have. It's going to be true of whoever the next Labour Prime Minister will be, and Key's successor isn't going to like it one little bit.

I guess the Reader's Digest version is this: Do your soundbites -- all of them -- have actual nutritional value, or are they just empty calories filling the space?

-

Bart Janssen, in reply to

Yep. That’s a story they’ll want to run.

And yet that is exactly the story New Zealand needs to hear to understand that MMP is actually working as intended and not just being steamrolled by National.

It IS a balanced situation where your vote counts - NOT what is being reported.

-

Graeme Edgeler, in reply to

Fair enough. And they could say "given the margin of error, at 4.9% in our poll, New Zealand First effectively has a 50-50 chance of getting back into Parliament".

I have it at 46% :-)
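One crude way to put a number on this, assuming a simple random sample of 1000 and a normal approximation centred on the poll result (the method behind the 46% isn't stated, so this is only a sketch, and `prob_at_or_above` is a made-up helper name). It comes out a little under 50%, in the same neighbourhood.

```python
import math
from statistics import NormalDist


def prob_at_or_above(threshold, poll_share, n):
    """Chance that true support is at or above the threshold, normal approximation."""
    se = math.sqrt(poll_share * (1 - poll_share) / n)  # standard error of the poll share
    return 1 - NormalDist(mu=poll_share, sigma=se).cdf(threshold)


# Assumed inputs: NZ First on 4.9% in a poll of 1000, against the 5% threshold.
p = prob_at_or_above(0.05, 0.049, 1000)
print(f"chance of reaching 5%: {p:.0%}")
```

This ignores non response, weighting, and any tactical voting around the threshold, all of which would move the answer.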

-

At the risk of cross-threading, a side-note in the polls is how education looks to be at the top of the list this election.

Parata's oblivious blithering isn't doing the Nats any favours.

But NZ needs articulate passionate voices (like Jollissa :)) to explain how National's innocuous-sounding managerial approach - all efficiencies, and 'outcomes' and 'world-class' - is dangerously wrong-headed, at best.

-

Russell reported that Paddy said: "David Cunliffe was found to be using a trust to conceal donations to his campaign for the .............. Do you think David Cunliffe’s actions were worthy of a man who wants to be Prime Minister?"

To be fair the survey could have said: "Mr Key concealed the fact that his charity game of golf was actually a fund raiser for the National Party and he concealed a second game in the same way. Do you think John Key's actions were worthy of a man who is Prime Minister?"

Balanced questions Paddy?

-

Russell Brown, in reply to

To be fair the survey could have said: “Mr Key concealed the fact that his charity game of golf was actually a fund raiser for the National Party and he concealed a second game in the same way. Do you think John Key’s actions were worthy of a man who is Prime Minister?”

Balanced questions Paddy?

The wording was fair, as is the wording of the Judith Collins question also asked but not yet aired. I don't know what the wording of the Key/Oravida question is, but the deadline for questions might have been before Gower's Shanghai office scoop.

-

Bart Janssen, in reply to

I'd argue that such a graph is a clearer version of the data than any of the numbers given in the news.

-

Graeme Edgeler, in reply to

So John Key and National are ‘masking’ their donations by using restaurants and other events, where participants are making donations or paying over the top for services.

It seems a clear strategy to get around the election donor law

Not even close. The way it was structured increased transparency.

-

I didn't read the entire previous discussion, but did any of the historical analyses of polling account for the 5% threshold?

If Winston polls 4.9% then I would pick NZF to be in, based on how many people I heard last time saying they might throw him a vote to nudge him over. Possibly they learned something by how big a nudge he got, but there will be new suckers who hate to see other people disenfranchised.

My main gripe is that you can't run your numbers as if the public were ignorant of the 5% line. That's like ignoring the electoral college in the US presidential elections.

-

I read the replies by Patrick Gower in the post above. To me, they have all the believability and all the sincerity of a Rebekah Brooks solemnly promising she won't do it again.
