Computerised image recognition is on the one hand a technology so advanced as to be "indistinguishable from magic" and on the other so far short of what our human brains do from infancy as to be almost banal.

Either way, it's a vital tool for Google, which has developed neural networks – sets of algorithms – that can be trained on a corpus of existing images to recognise what's in a picture on the internet. Thing is, it's impossible to ever look at Google image recognition in quite the same way since two of the company's engineers published a post called Inceptionism: Going Deeper into Neural Networks on the Google Research Blog.

The post basically explains what they've designed their software to do:

We train an artificial neural network by showing it millions of training examples and gradually adjusting the network parameters until it gives the classifications we want. The network typically consists of 10-30 stacked layers of artificial neurons. Each image is fed into the input layer, which then talks to the next layer, until eventually the “output” layer is reached. The network’s “answer” comes from this final output layer.

One of the challenges of neural networks is understanding what exactly goes on at each layer. We know that after training, each layer progressively extracts higher and higher-level features of the image, until the final layer essentially makes a decision on what the image shows. For example, the first layer maybe looks for edges or corners. Intermediate layers interpret the basic features to look for overall shapes or components, like a door or a leaf. The final few layers assemble those into complete interpretations—these neurons activate in response to very complex things such as entire buildings or trees.
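For readers who like to see the plumbing, the layered structure described in the post can be sketched in a few lines of Python. This is only a toy under loud assumptions: the layer sizes, the random untrained weights and the helper names (`make_layer`, `forward`) are made up for illustration, and it uses three small fully connected layers where the post talks about 10-30 layers in Google's real networks.

```python
import random


def relu(values):
    """Keep positive signals, zero out negative ones."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One layer of artificial neurons: each neuron takes a weighted sum of its inputs."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def make_layer(n_in, n_out, rng):
    """Hypothetical, untrained layer: random weights, zero biases."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(pixels, layers):
    """Feed the image into the input layer and pass it up the stack.

    Returns the activations at every level, so each layer can be inspected.
    """
    activations = [pixels]
    current = pixels
    for weights, biases in layers:
        current = relu(dense(current, weights, biases))
        activations.append(current)
    return activations


rng = random.Random(0)
layer_sizes = [16, 8, 4, 2]  # assumed sizes: 16 "pixels" in, 2 possible answers out
layers = [make_layer(a, b, rng) for a, b in zip(layer_sizes, layer_sizes[1:])]

pixels = [rng.random() for _ in range(16)]
activations = forward(pixels, layers)

# The network's "answer" is whichever output neuron responds most strongly.
answer = max(range(len(activations[-1])), key=lambda i: activations[-1][i])
print("values per layer:", [len(a) for a in activations])
print("answer:", answer)
```

Training, which this sketch leaves out, would nudge those weights over millions of examples until `answer` matched the labels. The list returned by `forward` is the part the engineers say is hard to interpret: what the numbers at each intermediate layer actually mean.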

But their neural network also does things they didn't quite expect:

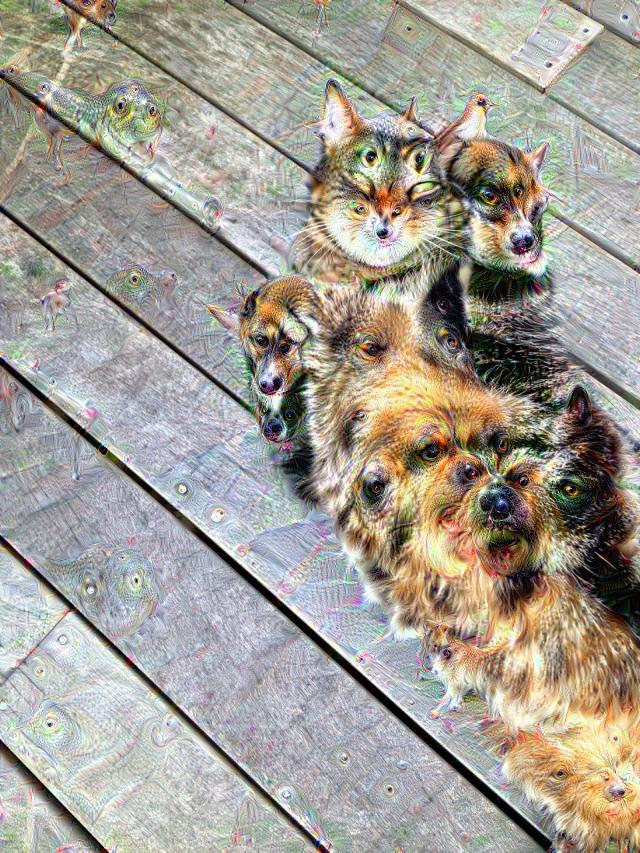

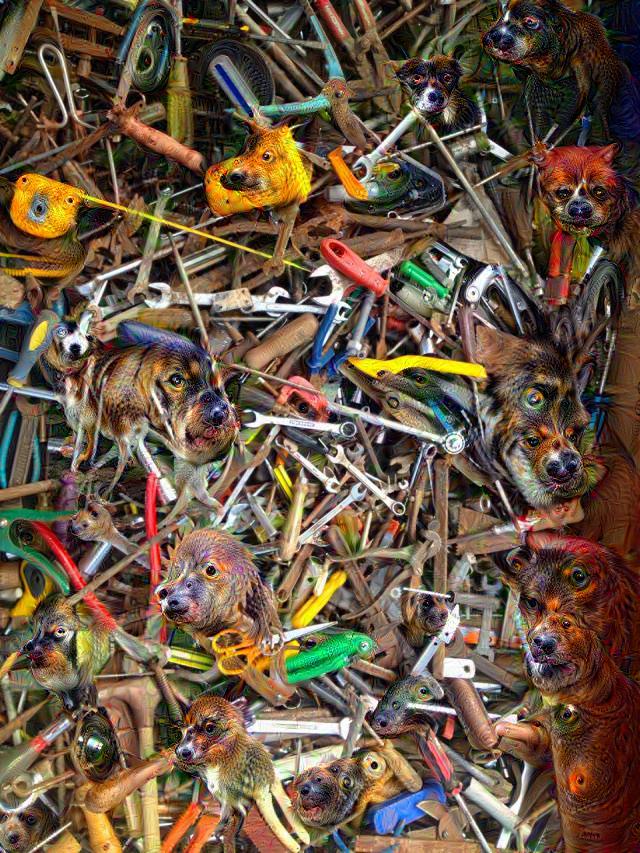

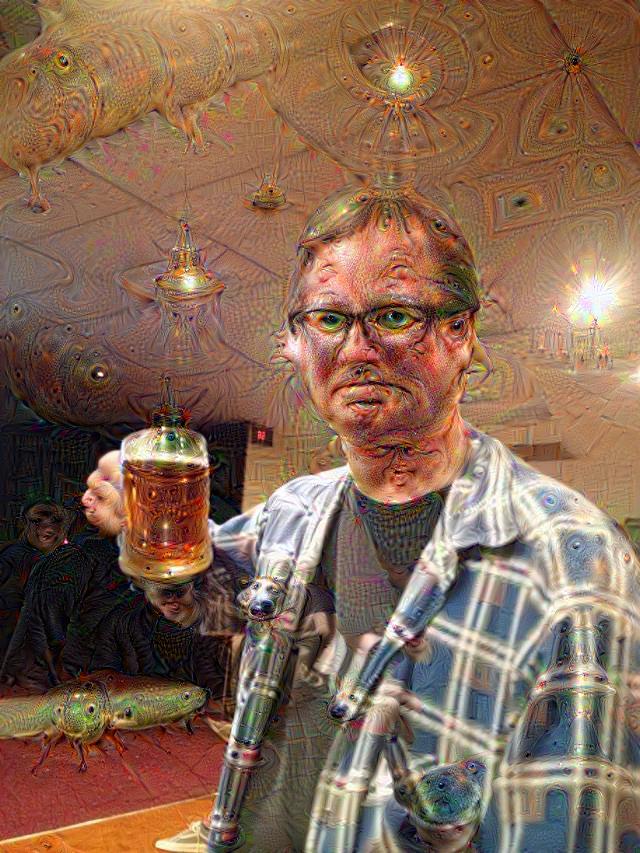

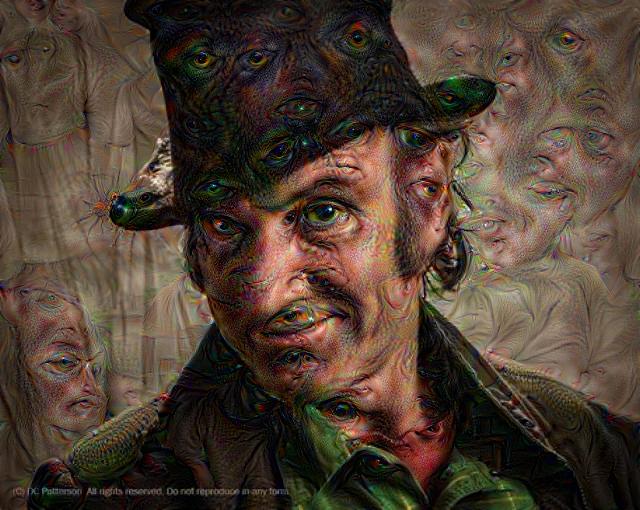

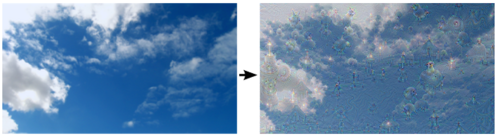

So here’s one surprise: neural networks that were trained to discriminate between different kinds of images have quite a bit of the information needed to generate images too ... If we choose higher-level layers, which identify more sophisticated features in images, complex features or even whole objects tend to emerge. Again, we just start with an existing image and give it to our neural net. We ask the network: “Whatever you see there, I want more of it!” This creates a feedback loop: if a cloud looks a little bit like a bird, the network will make it look more like a bird. This in turn will make the network recognize the bird even more strongly on the next pass and so forth, until a highly detailed bird appears, seemingly out of nowhere.
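That feedback loop can be imitated in miniature. The sketch below is not Google's released code: it assumes a four-pixel "image" and two hand-written feature detectors (`DETECTORS` and its "bird" and "dog" patterns are hypothetical), and it uses plain gradient ascent on the image to make whatever the layer already responds to respond more strongly.

```python
def relu(value):
    return max(0.0, value)


# Hypothetical detectors for one layer: each is a pattern of pixel weights.
DETECTORS = {
    "bird": [1.0, -1.0, 1.0, -1.0],
    "dog": [-1.0, 1.0, 1.0, -1.0],
}


def activations(image):
    """How strongly each detector fires on the image."""
    return {
        name: relu(sum(w * p for w, p in zip(weights, image)))
        for name, weights in DETECTORS.items()
    }


def objective(image):
    """'Whatever you see there, I want more of it': total squared activation."""
    return 0.5 * sum(a * a for a in activations(image).values())


def gradient(image):
    """Direction in pixel space that increases the objective fastest."""
    acts = activations(image)
    grad = [0.0] * len(image)
    for name, weights in DETECTORS.items():
        for i, w in enumerate(weights):
            grad[i] += acts[name] * w
    return grad


def dream(image, steps=10, step_size=0.1):
    """Repeatedly nudge the image towards what the layer already sees in it."""
    history = [objective(image)]
    for _ in range(steps):
        grad = gradient(image)
        image = [p + step_size * g for p, g in zip(image, grad)]
        history.append(objective(image))
    return image, history


cloud = [0.6, 0.5, 0.6, 0.5]  # looks only slightly like the "bird" pattern
dreamed, history = dream(cloud)

print("before:", activations(cloud))
print("after: ", activations(dreamed))
```

The starting image triggers the "bird" detector only faintly, yet every pass strengthens that response, which in turn steers the next pass the same way. The real thing works on full images with a trained network and extra processing, but the self-reinforcing step is the same idea.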

Sounds cool, huh? Google thought it was so cool that it released the code as Deep Dream, in a format that would allow anyone with the requisite software skills to run it themselves. If you've seen any Deep Dream images, you may have thought "that computer is on drugs". There's a reason for that:

If Deep Dream looks psychedelic, it's because the computer is undergoing something that humans experience during hallucinations, in which our brains are free to follow the impulse of any recognizable imagery and exaggerate it in a self-reinforcing loop. Think of a psychedelic experience, where "you are free to explore all the sorts of internal high-level hypotheses or predictions about what might have caused sensory input," Karl Friston, professor of neuroscience at University College London, told Motherboard.

Deep Dream sometimes appears to follow particular rules; these aren't quite random, but rather a result of the source material. Dogs appear so often likely because there was a preponderance of dogs in the initial batch of imagery, and thus the program is quick to recognize a "dog." This reinforces Deep Dream as not just a piece of technology but as a discrete visual style, the same as Impressionism, Surrealism, or the geometric abstraction of Piet Mondrian. Just as Mondrian's work was printed on pants or the iconic Solo Jazz Cup pattern reproduced on T-shirts, Deep Dream imagery will eventually enter into the vernacular. It will no longer be strange, but instantly recognizable.

You may have wondered what Deep Dream would make of the images of what must be considered another part of the vernacular: pornography. Vice's Motherboard channel was quick to answer that question (warning: NSFW, obviously) and, to save you a click, it's every bit as weird as you might have thought.

Most people have contented themselves with Deep Dreaming pictures of pets, people and places. And the results are trippy. They really do look like what you might fancy you see on hallucinogenic drugs. I've posted below some of the images I ran through the Deep Dream interface at Psychic VR Lab (there's another one here).

You're warmly invited to post your own Deep Dream images in the discussion for this post (just click "Choose file" under the commenting window – you can save each one then edit your comment and add another two images per comment if you like). Do bear in mind that the Deep Dream process isn't instant – there's a queue at Psychic VR Lab and it will take a day or two to process, so you'll need to bookmark each confirmation page and come back to it to see if your image is done.